Generative AI for Peer-Programming, Testing and Code Review

Improving Software Development Productivity

Feb 16, 2026

Research Introduction

By: Andre Nunes

The rapid advancement of Generative Artificial Intelligence has begun to reshape contemporary software engineering practices, particularly in the areas of peer programming, software testing, and code review. Large Language Models are now capable of generating executable code, explaining complex logic, producing automated tests, and supporting developers during review activities. While these capabilities are widely discussed in both industry and academia, much of the existing evidence remains anecdotal or focused on limited experimental settings. This article presents partial findings from an ongoing research project investigating the effectiveness of Generative AI as a peer programming assistant within real-world software development environments. The primary aim is to share early empirical results, reflect on observed productivity and quality improvements, and outline key methodological learnings emerging from the study so far. The broader research seeks to contribute data-driven insights into how AI-assisted development influences efficiency, code quality, and collaboration when compared with traditional human-only approaches.

What is a Large Language Model?

A Large Language Model is a class of machine learning model designed to understand and generate human-like language by learning statistical patterns from vast corpora of text. These models are typically based on transformer architectures and are trained using self-supervised learning techniques, such as next-token prediction. By exposing the model to large-scale textual datasets, it learns grammatical structures, semantic relationships, and contextual dependencies within language. When applied to software engineering, Large Language Models extend beyond natural language to programming languages, enabling them to generate code, explain algorithms, and reason about software behaviour. Rather than encoding explicit rules, they operate probabilistically, predicting likely sequences of tokens based on context, which allows them to adapt flexibly across different tasks and domains.

Natural Language Capability in the Context of AI

Natural language capability refers to an AI system’s ability to interpret, reason over, and generate human language in a way that preserves meaning, intent, and contextual relevance. In the context of Generative AI, this capability is foundational, as it enables developers to interact with the system using conversational prompts rather than rigid programming interfaces. For software development tasks, natural language acts as a bridge between human intent and machine execution. Developers can describe requirements, constraints, or desired behaviours in plain language, and the AI system translates those instructions into code, tests, or explanations. This shift significantly lowers the cognitive and technical barriers to interacting with complex systems and enables a more collaborative, dialogue-driven development process.

What is Generative AI?

Generative AI refers to a category of artificial intelligence systems capable of producing new content, including text, code, images, audio, or other data modalities, based on learned patterns from training data. Unlike traditional analytical AI systems that focus on classification or prediction, Generative AI creates original outputs that resemble human-produced artefacts. From a systems perspective, Generative AI extends beyond individual models to include supporting components such as prompt processing, contextual memory, external tools, and retrieval mechanisms. In software engineering, Generative AI is increasingly used to generate source code, automate documentation, create test cases, and assist with debugging. These systems operate as socio-technical artefacts, augmenting human capabilities rather than fully replacing developer decision-making.

AI Code Generation and Developer Productivity

One of the most frequently cited benefits of Generative AI in software development is improved productivity. By automating repetitive tasks, accelerating initial code creation, and providing instant feedback, AI-assisted tools can reduce the time required to implement features and resolve defects. In practice, AI can rapidly scaffold solutions, propose alternative implementations, and suggest improvements that would otherwise require manual research or peer consultation. However, productivity gains are not solely a function of speed. They also depend on the quality of generated code, the effort required to review and validate outputs, and the extent to which AI-generated artefacts align with existing architectural and coding standards. Understanding productivity therefore requires a holistic view of the development lifecycle rather than a narrow focus on coding speed alone.

Challenges in Evaluating AI-Generated Code

Evaluating AI-generated code presents methodological and practical challenges. While functional correctness can often be assessed through compilation and testing, attributes such as readability, maintainability, and architectural consistency are more subjective and context-dependent. AI-generated code may appear syntactically correct and functionally valid, yet still diverge from established design principles or introduce subtle technical debt. Furthermore, because Generative AI systems rely on probabilistic inference, identical prompts can yield different outputs, complicating reproducibility and benchmarking. These factors necessitate careful experimental design and the use of both quantitative metrics and qualitative assessments when evaluating AI-assisted development.

Readability and Maintainability

Readability and maintainability are critical indicators of long-term software quality. Code that is difficult to understand or modify can erode productivity gains achieved during initial development. Early observations from the research indicate that AI-generated code often demonstrates strong structural consistency but may require refinement to improve naming conventions, modularisation, and alignment with project-specific standards. Developers remain essential in shaping the final output, reviewing AI-generated suggestions, and ensuring that the codebase remains coherent over time. The role of the AI, in this context, is best understood as an accelerator and collaborator rather than an autonomous author.

Security and Ethical Concerns

The use of Generative AI in software development raises important security and ethical considerations. AI-generated code can inadvertently introduce vulnerabilities, particularly when prompts lack sufficient constraints or contextual guidance. There are also concerns related to intellectual property, data provenance, and the potential reproduction of insecure patterns present in training data. From an ethical standpoint, over-reliance on AI may reduce opportunities for skill development among junior developers if not carefully managed. Addressing these risks requires robust governance, clear usage policies, and the integration of security and quality checks throughout the development pipeline.

Human-AI Collaboration and Complementarity

A central theme emerging from both the literature and this research is the notion of complementarity between human developers and AI systems. Rather than replacing human expertise, Generative AI functions most effectively when it complements human judgment, creativity, and contextual awareness. In peer programming scenarios, the AI acts as a continuously available collaborator that can propose solutions, challenge assumptions, and provide alternative perspectives. The quality of this collaboration depends heavily on how developers frame their prompts, interpret AI responses, and integrate suggestions into their workflow. Effective human-AI collaboration emerges through iterative interaction rather than one-off instructions.

An Alternative AI Peer-Programming Tool

While much existing research focuses on GitHub-based tools, this study explores Generative AI usage within alternative development ecosystems, particularly GitLab-based environments. These platforms introduce distinct workflows, including integrated DevSecOps pipelines, merge request processes, and continuous integration practices, which influence how AI-assisted tools are applied in practice. By examining AI peer programming within this context, the research contributes to a more diverse and representative understanding of AI-assisted software development beyond a single dominant platform.

Partial Learnings and Outcome

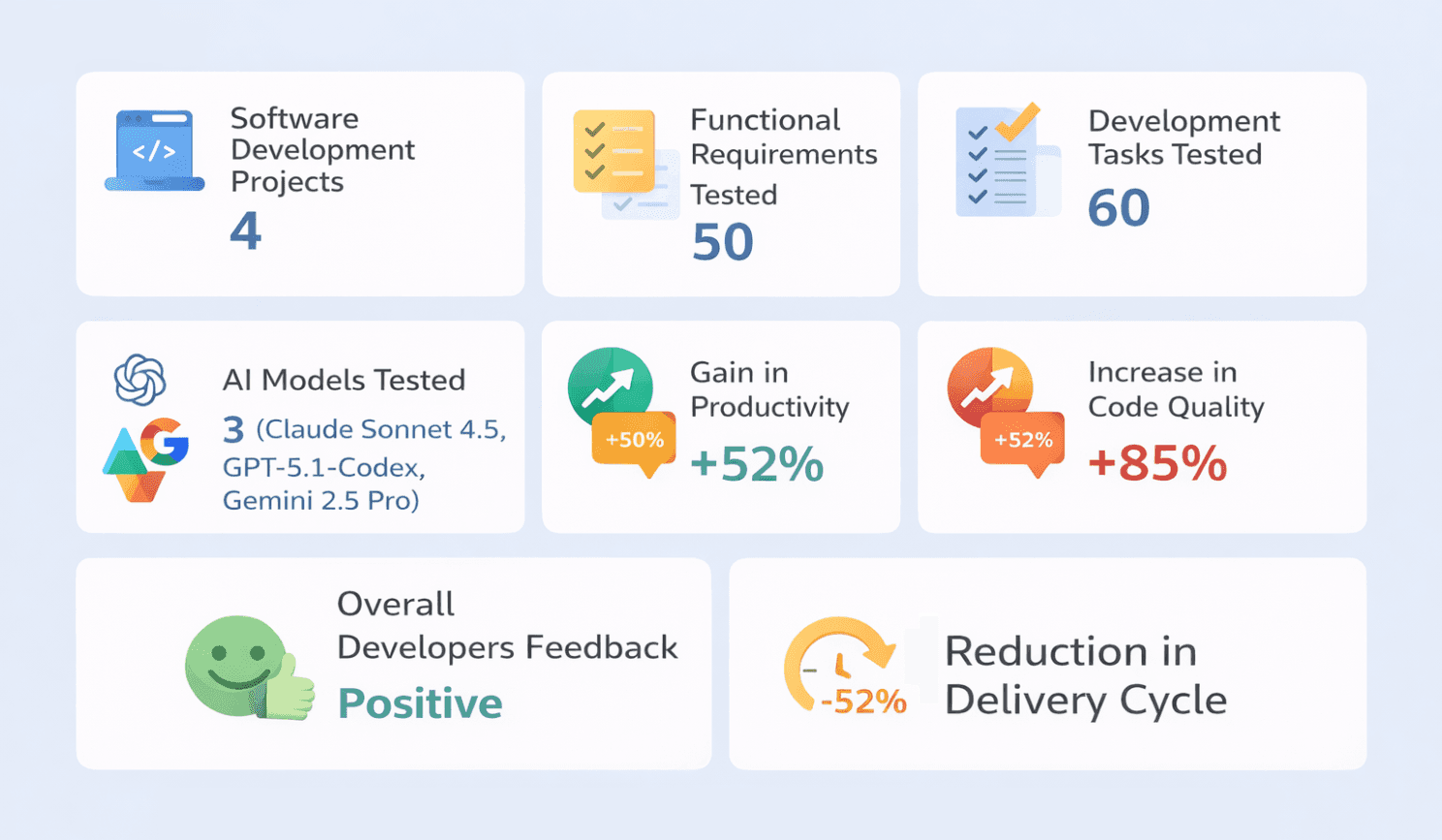

The research adopts a case study and mixed-methods approach to evaluate the effectiveness of Generative AI for peer programming, testing, and code review. To date, Generative AI has been applied across four (4) real-world software development projects, where it has been used to produce code, generate automated tests, and support code review activities. Over fifty (50) functional requirements and more than sixty (60) development tasks have been analysed so far while using three (3) AI models (Claude Sonnet 4.5, GPT-5.1-Codex, Gemini 2.5 Pro).

The preliminary results are very promising. The findings suggest a real productivity gain of over fifty percent (50%), a reduction in the overall delivery lifecycle of approximately fifty-two percent (52%), and an increase in measured code quality of around eighty-five percent (85%) when compared with human-only development approaches.

These results should be interpreted as partial and evolving. The study indicates that outcomes improve as teams gain experience with Generative AI and refine their development practices. In particular, improvements in requirements engineering, testing strategies, and code standards contribute directly to better AI-assisted outputs. Most importantly, the research highlights that prompt and context engineering are fundamental to effective interaction with Generative AI models.

As developers become more proficient in structuring prompts, providing relevant context, and iteratively refining instructions, the quality, reliability, and usefulness of AI-generated artefacts increase significantly. This learning curve suggests that the full potential of Generative AI in software development is realised not through tooling alone, but through the maturation of human practices that govern how these tools are used.

The Importance of Human in the Loop

Concept

The concept of Human in the Loop refers to the deliberate inclusion of human judgment, oversight, and decision-making within AI-driven systems. Rather than allowing an AI model to operate autonomously, Human in the Loop systems are designed so that humans guide, validate, correct, and refine AI outputs throughout the lifecycle of a task. In practice, this means that AI-generated artefacts are not treated as final or authoritative by default, but as suggestions or intermediate results that require human evaluation. This approach recognises that, while AI systems can process large volumes of information and generate outputs at speed, they lack true understanding of context, intent, organisational constraints, and ethical implications.

Importance

The importance of Human in the Loop in AI systems stems from the inherent limitations of Generative AI models. These models operate probabilistically and generate outputs based on patterns learned from historical data, not on an understanding of truth, correctness, or responsibility. As a result, AI systems may produce outputs that are syntactically plausible but semantically incorrect, insecure, or misaligned with real-world requirements. Human involvement is essential to detect errors, challenge assumptions, and apply domain knowledge that the model does not possess. Human oversight also plays a critical role in managing risks related to bias, security vulnerabilities, intellectual property, and ethical use, ensuring that AI-assisted outcomes remain trustworthy and accountable.

Coding and Peer Programming Context

In the context of coding and peer programming, Human in the Loop is particularly relevant because software development is not purely a mechanical activity. Writing code involves interpreting ambiguous requirements, making architectural trade-offs, balancing performance with maintainability, and aligning solutions with long-term business and technical strategies. While Generative AI can rapidly generate code snippets, tests, or refactoring suggestions, it cannot fully grasp the broader system context or the evolving intent behind a software product. Human developers must therefore remain responsible for validating logic, ensuring consistency with coding standards, and confirming that generated solutions are appropriate for the specific problem domain.

Within AI-assisted peer programming, the Human in the Loop model reframes the relationship between developers and AI. The AI acts as an always-available peer that proposes ideas, highlights alternatives, and accelerates routine tasks, while the human developer retains control over decision-making and quality assurance. This collaborative dynamic allows developers to focus more on higher-level reasoning, design, and problem-solving, while using AI to reduce cognitive load and execution effort. The research conducted so far reinforces that the most significant productivity and quality gains occur when developers actively engage with the AI, iteratively refine prompts, challenge outputs, and integrate suggestions thoughtfully rather than accepting them passively.

Ultimately, Human in the Loop is not a constraint on Generative AI but a key enabler of its effective use in software engineering. By embedding human oversight into AI-assisted coding and peer programming workflows, organisations can harness the speed and scalability of AI while preserving accountability, quality, and professional judgment. This balance is essential for sustainable adoption, ensuring that Generative AI strengthens, rather than undermines, the role of the software developer.